15 things AI can (and can't) do

Artificial intelligence is a technology built and programmed to assist computer systems in mimicking human behavior. Algorithm training informed by experience and iterative processing allows the machine to learn, improve, and ultimately use human-like thinking to solve complex problems.

Although there are several ways computers can be "taught," reinforcement learning, in which AI is rewarded for desired actions and penalized for undesirable ones, is one of the most common. This method, which allows the AI to become smarter as it processes more data, has been highly effective, especially for gaming.
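To make the reward-and-penalty idea concrete, here is a minimal sketch in Python. It is not how any particular game-playing AI works. It assumes a toy setting, often called a multi-armed bandit, in which the program repeatedly picks one of three actions and receives +1 for a desired outcome or -1 otherwise. The function name `train_bandit`, the success probabilities, and the `epsilon` exploration rate are all illustrative assumptions.

```python
import random


def train_bandit(success_probs, steps=5000, epsilon=0.1, seed=0):
    """Learn which action pays off best by trial and error (epsilon-greedy)."""
    rng = random.Random(seed)
    values = [0.0] * len(success_probs)  # running estimate of each action's reward
    counts = [0] * len(success_probs)    # how many times each action was tried
    for _ in range(steps):
        if rng.random() < epsilon:
            # Explore: occasionally try a random action.
            action = rng.randrange(len(values))
        else:
            # Exploit: otherwise pick the action that looks best so far.
            action = max(range(len(values)), key=values.__getitem__)
        # Hypothetical environment: reward +1 on success, penalty -1 on failure.
        reward = 1.0 if rng.random() < success_probs[action] else -1.0
        counts[action] += 1
        # Update the running average reward for the chosen action.
        values[action] += (reward - values[action]) / counts[action]
    return values


values = train_bandit([0.2, 0.5, 0.8])
best_action = max(range(len(values)), key=values.__getitem__)
print("Estimated rewards:", [round(v, 2) for v in values])
print("Preferred action:", best_action)
```

The more rounds the program plays, the closer its estimates get to each action's true average reward, which is the sense in which such a system "gets smarter" with more data.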

AI can filter email spam, categorize and classify documents based on tags or keywords, launch or defend against missile attacks, and assist in complex medical procedures. However, if people feel that AI is unpredictable and unreliable, collaboration with this technology can be undermined by an inherent distrust of it. Diversity-informed algorithms can detect nuanced communication and distinguish behavioral responses, which could inspire more faith in AI as a collaborator rather than just as a gaming opponent.

Stacker assessed the current state of AI, from predictive models to learning algorithms, and identified the capabilities and limitations of automation in various settings. Keep reading for 15 things AI can and can't do, compiled from sources at Harvard and the Lincoln Laboratory at MIT.

Can: Be trained and 'learn'

AI combines data inputs with iterative processing algorithms to analyze and identify patterns. With each round of new inputs, AI "learns" through the deep learning and natural language processing techniques built into training algorithms.

AI rapidly analyzes, categorizes, and classifies millions of data points, and gets smarter with each iteration. Learning through feedback from the accumulation of data is different from traditional human learning, which is generally more organic. After all, AI can mimic human behavior but cannot create it.

Cannot: Get into college

AI cannot answer questions requiring inference, a nuanced understanding of language, or a broad understanding of multiple topics. In other words, while scientists have managed to "teach" AI to pass standardized eighth-grade and even high-school science tests, it has yet to pass a college entrance exam.

College entrance exams require greater logic and language capacity than AI is currently capable of and often include open-ended questions in addition to multiple choice.

Can: Perpetuate bias

The majority of employees in the tech industry are white men. And since AI is essentially an extension of those who build it, biases can (and do) emerge in systems designed to mimic human behavior.

Only about 25% of computer jobs and 15% of engineering jobs are held by women, according to the Pew Research Center. Fewer than 10% of people employed by industry giants Google, Microsoft, and Meta are Black. This lack of diversity becomes increasingly magnified as AI "learns" through iterative processing and communicating with other tech devices or bots. With increasing incidents of chatbots repeating hate speech or failing to recognize people with darker skin tones, diversity training is necessary.

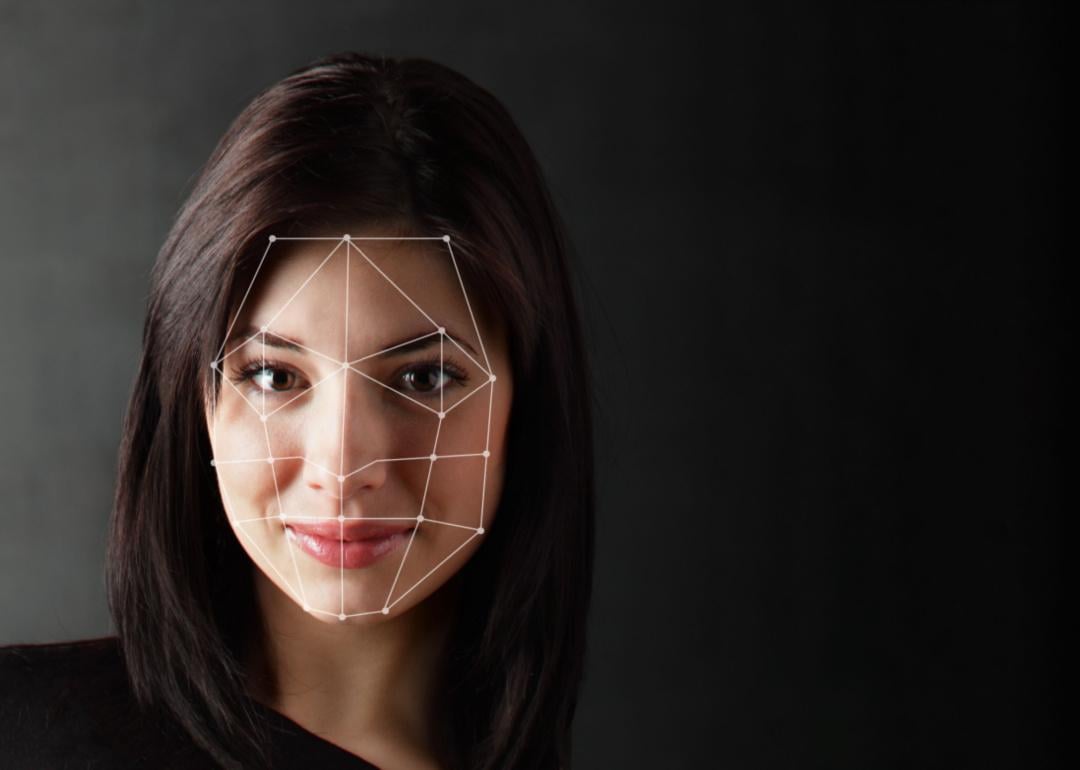

Can: Identify images and sounds

Unstructured data like images, sounds, and handwriting makes up around 90% of the information companies receive. AI's ability to recognize it has almost unlimited applications, from medical imaging to autonomous vehicles to digital and video facial recognition and security. With the potential for this kind of autonomous power, diversity training is an imperative inclusion in university-level STEM pedagogy, where more than 80% of instructors are white men, to enhance diversity in hiring practices and, in turn, in AI.

Cannot: Drive a car

Even with so much advanced automotive innovation, self-driving cars cannot reliably and safely handle driving on busy roads. This means that AI tech for passenger cars is likely a long way off from full autopilot. Following a number of accidents, the industry is focusing on testing and development rather than pushing for full-scale commercial production.

Cannot: Judge beauty contests

Beauty.ai programmed three different algorithms to measure symmetry, wrinkles, and youth in a beauty contest judged by an AI system. While the machines were not programmed to select skin color as part of the beauty equation, almost all of the 44 selected winners were white. No algorithms were programmed to detect melanin or darker skin as a component.

Can: Read and classify text and documents

With most incoming information being unstructured data, companies employ AI programmed with deep learning (DL) and natural language processing (NLP) to categorize and classify texts and documents.

One common example is Google's Gmail algorithm, which sorts out spam. Another example of AI filtering incoming unstructured data is Facebook's hate speech detection feature. However, AI tends to struggle to detect nuance, so humans usually have to review AI-flagged content. Sentiment algorithms informed by diversity and inclusivity training are needed to detect cultural contexts.
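As a rough illustration of how a program can learn to sort text into categories, the sketch below trains a tiny naive Bayes classifier in Python. This is a textbook technique chosen for illustration, not a description of how Gmail or Facebook actually filter content. The four training messages, the labels "spam" and "ham," and the function names `train` and `classify` are all made up for the example.

```python
import math
from collections import Counter


def train(examples):
    """Count how often each word appears under each label."""
    word_counts = {}
    label_counts = Counter()
    for text, label in examples:
        label_counts[label] += 1
        word_counts.setdefault(label, Counter()).update(text.lower().split())
    return word_counts, label_counts


def classify(text, word_counts, label_counts):
    """Return the label whose word statistics best match the text."""
    vocab = {word for counts in word_counts.values() for word in counts}
    total_examples = sum(label_counts.values())
    scores = {}
    for label, counts in word_counts.items():
        # Start from how common the label is overall.
        score = math.log(label_counts[label] / total_examples)
        total_words = sum(counts.values())
        for word in text.lower().split():
            # Add-one smoothing so unseen words do not zero out the score.
            score += math.log((counts[word] + 1) / (total_words + len(vocab)))
        scores[label] = score
    return max(scores, key=scores.get)


# Hypothetical training data, invented for this sketch.
examples = [
    ("win a free prize now", "spam"),
    ("claim your free money", "spam"),
    ("meeting agenda for monday", "ham"),
    ("lunch with the project team", "ham"),
]
word_counts, label_counts = train(examples)
print(classify("free prize money", word_counts, label_counts))
print(classify("project meeting monday", word_counts, label_counts))
```

Because the classifier only counts words, it has no sense of sarcasm, context, or culture, which is one reason human reviewers are still needed for flagged content.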

Cannot: Watch (and understand) a soccer match

AI can be described as brittle, meaning it can break down easily when encountering unexpected events. During the isolation of COVID-19, one Scottish soccer team used an automatic camera system to broadcast its match. But the AI camera confused the soccer ball with another round, shiny object: a linesman's bald head.

Can: Flip burgers

Flippy is an AI assistant that is flipping burgers at fast food chains in California. The AI relies on sensors to track temperature and cooking time. However, Flippy is designed to work with humans rather than replace them. Eventually, AI assistants like Flippy will be able to perform more complicated tasks—but they won't be able to replace a chef's culinary palate and finesse.

Can: Make investments

Smarter computers make smarter investments: free of the emotional biases of human traders, AI-driven trading has increased financial returns.

Investing algorithms are driven by reinforcement learning, which analyzes hundreds of millions of data points to calculate the investment with the highest reward. TD Ameritrade rolled out a voice-activated platform via Amazon's Alexa. People can tell Alexa to buy or sell while cooking dinner or driving in the car. One inherent bias here is that highly automated economies are more "successful" than emerging economies. So, based on AI's loss-aversion investment strategies, the machine could choose to invest in highly automated economies, which in turn could contribute to greater wealth disparity and actually stagnate economic growth.

Cannot: Help itself from buying things

In 2017, a Dallas six-year-old ordered a $170 dollhouse with one simple command to Amazon's AI device, Alexa. When a TV news journalist reported the story and repeated the girl's statement, "...Alexa ordered me a dollhouse," hundreds of devices in other people's homes responded to it as if it were a command.

As smart as this AI technology is, Alexa and similar devices still require human involvement to set preferences to prevent voice commands for automatic purchases and to enable other safeguards.

Can: Raise billions of cockroaches on a farm

China's pharmaceutical companies rely on AI to create and maintain optimal conditions for their largest cockroach breeding facility. Cockroaches are bred by the billions and then crushed to make a "healing potion" believed to treat respiratory and gastric issues, as well as other diseases.

Cannot: Be creative

People fear that a fully automated economy would eliminate jobs, and this is true to some degree: AI isn't coming, it's already here. But millions of algorithms programmed with a specific task based on a specific data point can never be confused with actual consciousness.

In a TED Talk, brain scientist Henning Beck asserts that new ideas and new thoughts are unique to the human brain. People can take breaks, make mistakes, and get tired or distracted: all characteristics that Beck believes are necessary for creativity. Machines work harder, faster, and longer, and work of that kind is what algorithms will take over. Trying and failing, stepping back and taking a break, and learning from new and alternative opinions are the key ingredients to creativity and innovation. Humans will always be creative because we are not computers.

Can: Clean your teeth

Learning from sensors, brush patterns, and teeth shape, AI-enabled toothbrushes also measure time, pressure, and position to maximize dental hygiene. More like electric brushes than robots, these expensive dental instruments connect to apps that rely on smartphones' front-facing cameras.

Can: Pollinate flowers

Plan Bee is a prototype drone pollinator that mimics bee behavior. Anna Haldewang, its creator, made the unusual-looking yellow and black AI education device to spread awareness about bees' roles as cross-pollinators and their significance in our food system. Other companies have also found ways to use AI for pollination and some are using it to improve bee health, as well.